How to test and learn?

Sarah Dyer is Manchester’s Associate Vice-President for Teaching Excellence and Innovation. She is a social scientist whose disciplinary research has explored work, expertise, care, and ethics. Drawing on these interests, her scholarly work has focused on courage and compassion in teaching and learning, partner-enabled learning, and human-centred design in HE. She is based in the Manchester Institute of Education.

Iria Lopez specialises in Human-Centred Design and Service Design and works as an independent consultant. She has worked in design and innovation for 20 years, focusing on Higher Education over the past five years. She led the introduction of design sprints for curriculum development at the University of Leeds for three years and has collaborated with King’s College London and London Business School. Previously, she worked in digital transformation for government, local councils, and international development agencies. You can find Iria on LinkedIn.

“We will make it normal to test, learn and adapt openly.”

The Manchester University 2035 Strategy Delivery Handbook

Given that we are used to experimenting and reviewing evidence in universities, the intention to make it normal to ‘test, learn, and adapt’ may not feel particularly radical. We have familiar and practised approaches to reviewing and improving what we do: degree programmes, assessments, services, and students’ learning experience. Annual review cycles, surveys, and committees are the rhythm of the academic year. Piloting innovations is part of institutions’ muscle memory.

But here’s the thing: however familiar ‘test and learn’ seems in principle, and however reassuringly recognisable the terms, testing and learning through a human-centred design (HCD) lens is mostly unfamiliar in practice. It requires adapting how we work and adopting new approaches and, indeed, new mindsets. In this blog we explore the difference between how ‘test and learn’ is traditionally understood in Higher Education and what it would look like with a human-centred design framing. We begin by outlining how HCD ‘test and learn’ differs from our familiar ways of working. We then introduce a tool which may help support this new way of working, providing a worked example from our own work.

What is human-centred design test and learn?

Traditionally, universities run pilots once a proposal is largely defined. For example, a new course unit, skills framework, or assessment approach is trialled with one cohort for an academic year. Often these pilots are run in areas where there is enthusiasm to be involved, rather than in those which are in some way representative. These pilots usually involve significant investment of time and resources and are often treated as near-final versions of the initiative. At this point, there is a lot at stake.

In human-centred design, ‘test and learn’ comes before any pilot and is a strategy to de-risk innovation. Rather than piloting the whole initiative, we first test and adapt key elements of it through small, low-risk activities. These tests might last two weeks or just an afternoon. As an example, let’s imagine we want to develop students’ ability to articulate the skills they are learning. We have seen others provide students with a skills audit framework to use. Any such initiative requires students to understand a list of skills. An appropriate ‘test’ would therefore be of what these students understand when presented with a list of skills. To test that, we could conduct a quick feedback session, with only three or four students, asking:

“Can you explain to me what you understand from this list of skills? How would you explain this list of skills to a friend of yours?”

While someone teaching these students might have a sense of what they are likely to say, explicitly asking outside of the context of actual teaching means these assumptions can be tested. The responses enable us to adjust the content of a skills audit, or even to assess whether it makes sense to distribute such a document at all. Such a test also supports wider adoption of successful innovations. Because the assumptions are made explicit and empirically tested in context, they can be reviewed and tested in any new context too.

Once the different elements of the planned initiative have been tested, a pilot then provides wider representation. This means that quick ‘test and learn’ rounds do not replace the more traditional pilots, but instead help us to ensure pilots have the best chance of being successful. Moreover, when we pilot without previously gathering feedback on the elements that constitute the pilot, it is harder to understand what caused a pilot to struggle, as many variables have been introduced at once. Smaller, contained tests can help surface risks earlier and more clearly.

Where can we apply a quick ‘test and learn’ approach?

Human-Centred Design is a set of tools and methods. It is also a mindset that values experimentation and learning and a ‘muscle’ that gets stronger with practice. It’s a more experimental way of working that can be applied as much to how meetings are run, how decisions are made, how staff and students collaborate, as to ‘Innovation’. We can test: a different agenda structure, an asynchronous activity before a meeting, the different use of space or facilitation techniques, or a new way of working together after the meeting. These are all great places for ‘testing and learning’.

A tool to support ‘test and learn’

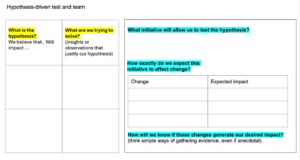

Describing ‘test and learn’ approaches as quick and low stakes carries the risk that they are undertaken without real clarity about what is being tested. ‘Quick’ can easily be misinterpreted as without attention to detail or without a clear method. To mitigate these risks, in the Student Change Lab, we have been using hypothesis-driven test and learn. When we use the word hypothesis here, we mean our assumptions about what we might do differently to change outcomes. Making these assumptions and our rationale explicit helps us to define the tests we will run more intentionally.

We have been using this hypothesis template to help define the tests we have been running in the Student Change Lab. We are sharing it as a tool to help you guide others’ thinking, and we would love to hear whether it is useful for others.

Members of the University can download the template here.

We would like to share an example of using this tool applied to how we collaborate with student representatives.

A worked example

The example below comes from our work in the Student Change Lab, where we have been experimenting with using human-centred design approaches with student academic reps.

What is our hypothesis?

We developed these hypotheses after discovery research in which we spoke to those involved in Student Voice/Staff Student Liaison Committees (academic reps, the Students’ Union, PS staff, and academics) and attended meetings to observe.

| What is the hypothesis? | What are we trying to solve? (insights or observations that justify our hypothesis) |
| --- | --- |
| We believe that supporting student academic reps to run participatory whole cohort sessions using human-centred design tools (such as journey mapping) will increase student engagement because these are participatory tools. | Academic reps tell us that they sometimes find it hard to engage their peers when they are gathering feedback and that they often get quite low responses to surveys they create. |
| We believe that the use of HCD tools will make it easier to interpret root causes, making it easier for committees to understand the problem we are trying to address. | In our observations, Staff Student Liaison/Student Voice committees often spend a long time interpreting feedback that is provided via a survey. |

What initiative will allow us to test the hypothesis?

Our ‘test and learn’ involves working with one year of one programme in two whole cohort timetabled sessions. The experiment was designed with the year’s student academic reps, the programme director, and the academic year manager. The student academic representatives receive training to introduce journey mapping and light-touch training on how to facilitate their peers creating journey maps in small groups. We then ask them to use journey mapping on their own to collect feedback and present the output to their Student Voice Meeting.

How exactly do we expect this initiative to effect change?

We expect that the training will enable reps to run participatory feedback events:

| Change | Expected impact |
| --- | --- |
| From individualised to whole cohort feedback opportunities. | Feedback given represents more diverse experiences. |
| From feedback collected using surveys, to feedback collected using journey mapping. | Feedback covers a narrative with a story that helps us make sense of why the students had a particular experience. |
| From survey analysis based only on summarising findings, to journey mapping analysis based on group discussions. | Analysis process encourages reflection to improve the depth of feedback and how actionable it is. |

How will we know if those changes generate our desired impact?

The ‘test’ leads to learning only through light-touch but systematic, evidence-based evaluation. In this case the evidence includes the views of those involved in the experiment and the project team’s reflections.

Simple indicators might include:

- Asking student academic reps whether the training has made them feel confident enough to run whole cohort mapping sessions.

- Noticing who contributes to the whole cohort session.

- Asking students about the experience of being part of the whole cohort session.

- Asking academic reps and programme teams about any change in the diversity and actionability of the feedback collected.

After the trial, structured reflection questions help decide what to do next:

- What has changed compared to before?

- Who was most affected, and how?

- What should we keep, adapt, or drop next time?

What this way of working enables

Human-Centred Design encourages these small ‘test and learn’ trials before large pilots, supported by an explicit hypothesis. These trials are best understood as questions acted out in real life, not decisions that have been locked in. This approach aligns closely with the Manchester 2035 commitment to “test, learn and adapt openly”. Testing here does not mean slowing things down or adding bureaucracy. It means reducing risk by learning early.

Crucially, we need to develop ways to capture and share our learning at scale, across the size and complexity of our university. The Manchester 2035 Delivery Handbook highlights the importance of capturing and sharing learning explicitly:

“Short summaries will be captured and shared with colleagues and communities of practice. Successful prototypes and lessons from those that didn’t work will be visible to others.”

Using simple templates, or variations of them, helps make this learning visible across the University. In practice, sharing learning means clearer reasoning behind decisions, a shared understanding of what ‘effective’ means, the ability to try changes without over-committing resources, adaptation to local contexts rather than one-size-fits-all solutions, and reflection and adjustment becoming a normal part of delivery. Manchester’s 2035 ambition requires us to see adjustment as success, not failure. Experimentation is not about uncertainty or lack of direction. It is about being honest about what we do not yet know, and choosing to learn deliberately rather than by accident. Crucially, it shifts the question from “Did this work or fail?” to “What did we learn, and what should we try next?”
